Not long ago, the idea that a perfectly realistic video of someone could be fabricated from nothing belonged in science fiction.

Now it belongs on the internet.

Deepfake technology allows anyone with modest technical skill to create convincing audio or video of a person saying or doing something that never happened. The results can be unsettlingly realistic. A politician giving a speech they never gave. A celebrity endorsing a product they have never heard of. A private individual appearing in a video they never consented to.

And the technology is improving at a speed that makes lawyers deeply uncomfortable.

Because the law was not built for a world where reality itself can be manufactured.

Deepfakes are already creating legal chaos. The question now is not whether lawsuits are coming. The question is how many.

Consider the simplest scenario. A company releases a social media ad showing a famous actor enthusiastically promoting a product. The actor never filmed the ad. The company simply generated the performance using artificial intelligence.

From a marketing perspective, the temptation is obvious.

From a legal perspective, it is a lawsuit waiting to happen.

Celebrities have long protected their likeness through what is known as the right of publicity. This legal doctrine allows individuals to control the commercial use of their identity. If a company profits from someone’s name, image, or voice without permission, the injured party can generally sue.

Deepfakes threaten to turn that right into a daily battleground.

A fake endorsement can spread across the internet in minutes. By the time lawyers become involved, the damage to reputation or brand value may already be done. The legal system, which moves at the speed of motions and hearings, is suddenly trying to chase something that moves at the speed of algorithms.

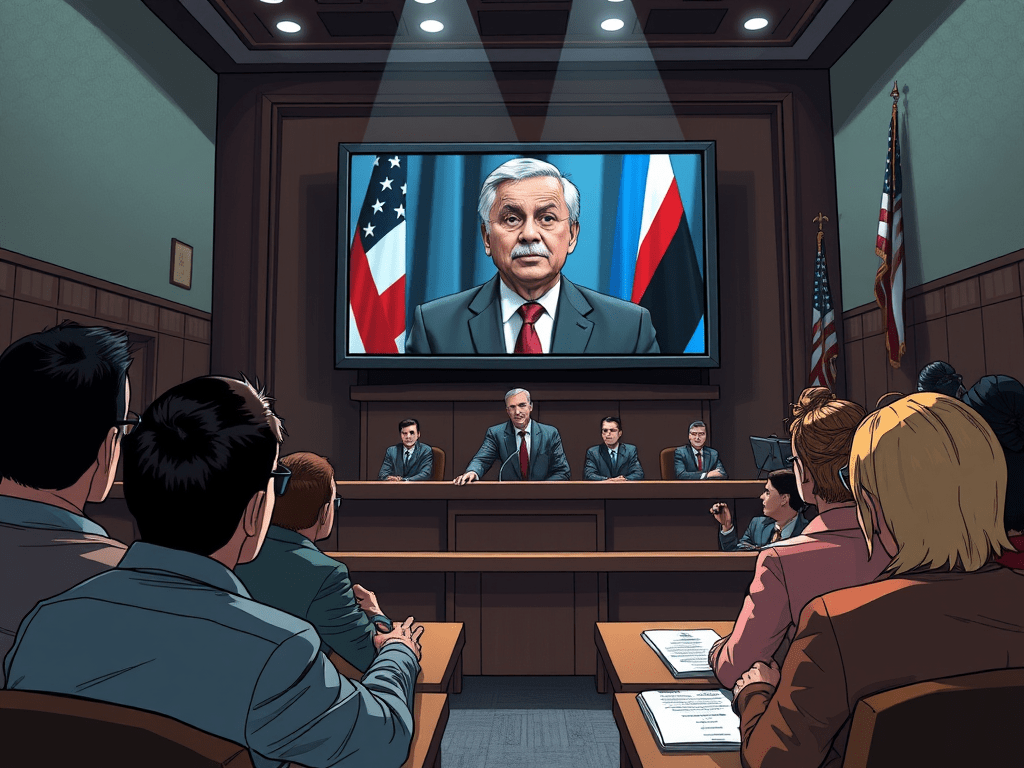

Then there are political deepfakes.

Imagine a video appearing days before an election that shows a candidate making a shocking statement. The video looks authentic. The voice matches perfectly. The facial movements are indistinguishable from real footage.

Within hours it spreads across social media.

Within days it may influence public opinion.

Even if the video is eventually proven fake, the damage cannot always be undone. The law has tools to address defamation and election interference, but those tools were designed for false statements made by identifiable speakers. Deepfakes complicate that equation. The creator may be anonymous, located in another country, or operating through layers of online accounts.

The legal question becomes not only whether the content is unlawful but whether anyone can realistically be held responsible.

Perhaps the most disturbing use of deepfake technology involves what are commonly called revenge deepfakes. These are fabricated intimate images or videos created without a person’s consent. Victims suddenly find themselves depicted in explicit scenes that never took place.

For years, the law struggled even with real nonconsensual images. Legislatures gradually passed laws targeting what became known as revenge pornography. Deepfakes introduce a new twist. The images are not stolen. They are invented.

Traditional defamation law requires proof that a false statement harmed a person’s reputation. But what happens when the falsehood is visual rather than verbal? When the lie appears not as a sentence but as a video that seems entirely real?

Courts are only beginning to wrestle with these questions.

At the heart of the problem is a simple fact. The legal system is designed to determine truth after harm occurs. It examines evidence, hears witnesses, and issues judgments months or years later.

Deepfakes, by contrast, operate in a world where harm spreads instantly.

A fake video can reach millions of people before the first lawyer finishes drafting a complaint. Even if a court eventually rules in favor of the victim, the reputational damage may already be permanent.

This creates a strange new legal landscape. The law still recognizes concepts like defamation, fraud, and misappropriation of likeness. Those doctrines still exist. They still apply.

But they were written for a world where fabricating convincing video evidence required a Hollywood studio.

Now it requires a laptop.

Some governments have begun to respond. New laws are emerging that specifically target synthetic media. Platforms are experimenting with watermarking systems designed to identify AI-generated content. Courts are exploring how existing doctrines might stretch to cover these situations.

But the technology continues to evolve faster than the rules governing it.

Which leads to the uncomfortable truth.

The coming wave of deepfake lawsuits will not simply test individual cases. It will test the legal system itself.

For well over a century, the law has relied on a basic assumption. That photographs and videos are evidence of something that actually happened.

Deepfakes quietly remove that assumption.

And when reality itself becomes uncertain, the courtroom becomes a much more complicated place.